The Internet is full of useful websites that can help you be more productive, build your knowledge, and learn new skills. Wikipedia is one of them. To look anything up on Wikipedia, though, you need an active Internet connection to fetch the data to your device.

If the Internet is unavailable, or if you live in a remote location, visiting a website regularly may not be possible. There is a way around this: download a complete copy of the website. Yes, you read that correctly. You can download an entire website for offline use.

It is easier than you might think, and you don’t need to download every webpage manually. Several tools can download a complete website and store it as a local copy.
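To give a rough idea of what such tools do behind the scenes, here is a minimal Python sketch of one step: finding the links on a page that stay on the same site, so they can be downloaded next. It is only an illustration and not how WebCopy or HTTrack are actually implemented. The names `LinkCollector` and `same_site_links` and the `example.com` URLs are made up for this example.

```python
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects the href/src values found in a page's tags."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name in ("href", "src") and value:
                self.links.append(value)


def same_site_links(page_url, html):
    """Return absolute, de-duplicated links that stay on page_url's host."""
    collector = LinkCollector()
    collector.feed(html)
    host = urlparse(page_url).netloc
    found = []
    for raw in collector.links:
        # Resolve relative links and drop any #fragment part.
        absolute, _fragment = urldefrag(urljoin(page_url, raw))
        if urlparse(absolute).netloc == host and absolute not in found:
            found.append(absolute)
    return found


# Hypothetical page content used only for this demonstration.
sample = (
    '<a href="/about.html">About</a> '
    '<img src="logo.png"> '
    '<a href="https://other.example/">Other</a>'
)
print(same_site_links("https://example.com/index.html", sample))
```

A real downloader repeats this for every page it finds and saves each file to disk, which is exactly the tedious part the tools below handle for you.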

1. WebCopy

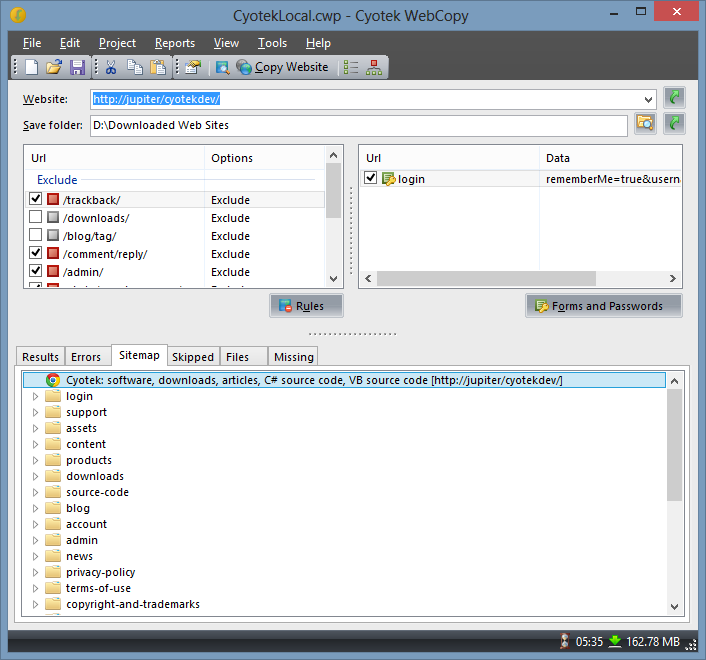

WebCopy is developed by Cyotek and is a popular name among website downloaders. It scans a website thoroughly and downloads every page it finds, along with media files. If you only want the webpages, you can configure the download preferences accordingly. It is one of the best tools for making an offline copy of a website on Windows.

To download a website using WebCopy, follow the steps below.

- Download the WebCopy program from here and install it.

- Open the program and navigate to File -> New to create a new project.

- Type the URL of the website you want to download.

- You can change the destination folder if you want to save the copy elsewhere on your hard disk.

- You can tweak the settings by heading to Project -> Rules.

- Navigate to File -> Save As to save the project.

- Click Copy Website in the toolbar to start the process.

2. HTTrack

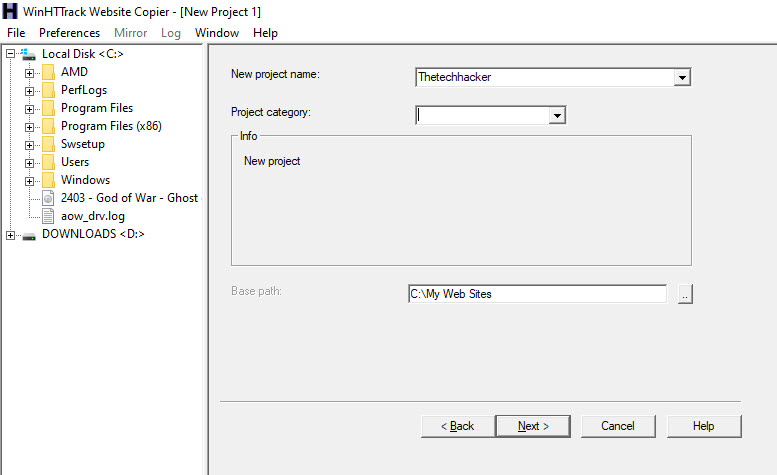

HTTrack is a free, easy-to-use website downloader. It is completely open-source and available for Windows, Linux, and Android.

It works much like WebCopy and lets you download a full website to a local directory. To download a complete local copy of a website with HTTrack, follow the steps below.

- Download the HTTrack program from here and install it.

- Click on Next to begin creating a new project.

- Type the project name, category, and the base path where the copy will be saved, and then click Next.

- Select Download web site and then type the website URL in the Web Addresses box.

- You can also save URLs in a TXT file and import it. This is useful if you want to re-download the same sites later.

- Adjust the parameters and then click Finish.
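HTTrack also ships a command-line program, so the steps above can be scripted if you prefer. The following Python sketch builds such a command, assuming `httrack` is installed and on your PATH. The `-O` option sets the output folder and `-rN` limits the link depth. The helper name `build_httrack_command`, the `example.com` URL, and the `example-mirror` folder are placeholders made up for this example.

```python
import shlex
import subprocess


def build_httrack_command(url, output_dir, depth=None):
    """Build an argument list for the httrack command-line tool."""
    command = ["httrack", url, "-O", output_dir]
    if depth is not None:
        # -rN limits how many links deep HTTrack follows.
        command.append(f"-r{depth}")
    return command


def run_mirror(command):
    """Start the download; raises an error if httrack exits with a failure."""
    subprocess.run(command, check=True)


# Placeholder URL and folder, shown here without starting a download.
command = build_httrack_command("https://example.com/", "example-mirror", depth=3)
print(shlex.join(command))
```

Calling `run_mirror(command)` would start the actual download. It is left out of the example so that nothing is fetched until you have checked the printed command.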

Conclusion

These two programs are among the best-performing website grabbers and are hassle-free to use. Keep in mind that either program takes some time to download an entire website, depending on its size: the bigger the website, the longer the download takes.

Which tool have you tried, and what is your opinion of it? Let us know in the comment section below.
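For a rough feel of how long a download might take, you can divide the size of the site by your connection speed. The small Python sketch below does that arithmetic. The function name and the figures (a 500 MB site on a 20 Mbit/s connection) are illustrative assumptions, and real downloads will usually be slower because of server limits and the number of files involved.

```python
def estimate_download_seconds(site_size_mb, speed_mbps):
    """Rough lower bound: size in megabytes, speed in megabits per second."""
    if speed_mbps <= 0:
        raise ValueError("speed_mbps must be positive")
    megabits = site_size_mb * 8  # 1 byte = 8 bits
    return megabits / speed_mbps


# Hypothetical example: a 500 MB site over a 20 Mbit/s connection.
seconds = estimate_download_seconds(500, 20)
print(f"About {seconds / 60:.1f} minutes")
```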
